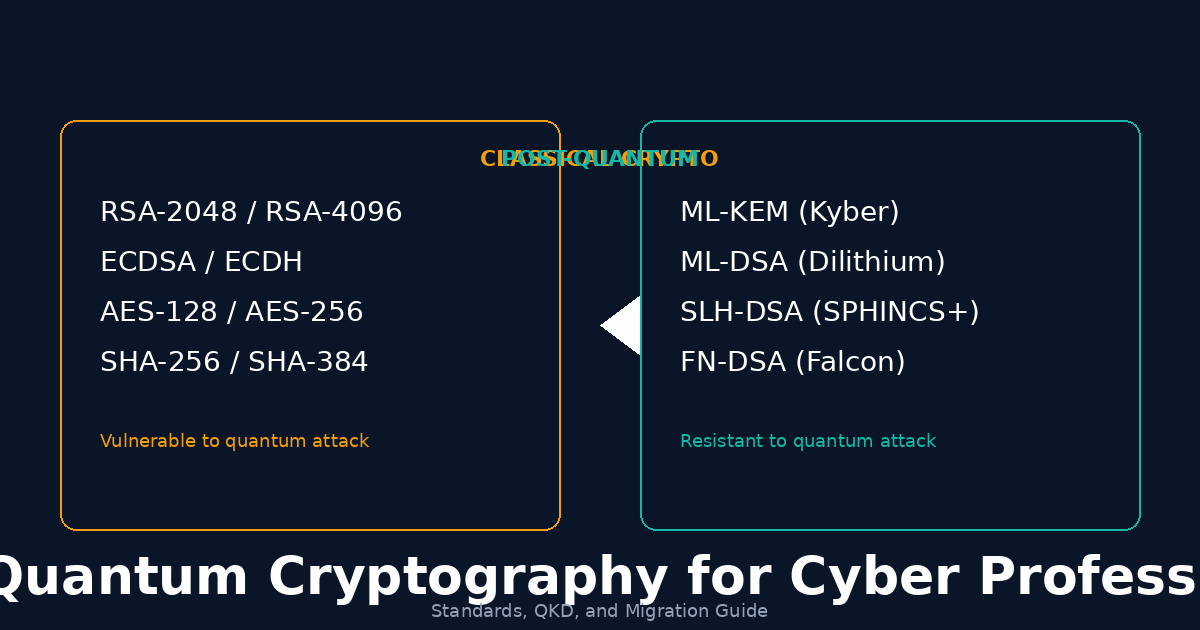

Post-quantum cryptography (PQC) has moved from academic conference papers to real-world standards in the span of just a few years. In August 2024, the U.S. National Institute of Standards and Technology (NIST) published its first three post-quantum cryptographic standards — ML-KEM, ML-DSA, and SLH-DSA — marking a turning point for cybersecurity. A fourth standard, FN-DSA, followed in 2025. These algorithms are designed to resist attacks from both classical computers and the quantum computers expected to emerge over the coming decades.

For cybersecurity professionals, this is not a distant concern. Adversaries are already collecting encrypted traffic today, banking on the ability to decrypt it once quantum hardware matures — a strategy known as harvest-now, decrypt-later. If your organization handles data with a confidentiality horizon of 10 years or more, the quantum clock is already ticking. This guide covers what PQC is, how it differs from quantum key distribution (QKD), what hybrid TLS looks like in practice, and how to build a migration roadmap for your infrastructure.

The Quantum Threat: Why It Matters Now

Current public-key cryptography relies on mathematical problems that are computationally hard for classical computers. RSA depends on the difficulty of factoring large integers, and elliptic curve cryptography (ECC) relies on the elliptic curve discrete logarithm problem. Two quantum algorithms matter here: Shor’s algorithm breaks both outright, while Grover’s algorithm weakens symmetric cryptography.

Shor’s Algorithm

Shor’s algorithm, published by Peter Shor in 1994, can factor large integers and compute discrete logarithms in polynomial time on a sufficiently powerful quantum computer. This directly breaks RSA, Diffie-Hellman, and all elliptic curve variants (ECDH, ECDSA, EdDSA). The implications are sweeping: TLS handshakes, code signatures, certificate authorities, S/MIME email encryption, VPN tunnels, SSH keys, and blockchain signatures all depend on these constructions.

Estimates for when a cryptographically relevant quantum computer (CRQC) capable of running Shor’s algorithm at scale will arrive vary, but most serious assessments place it between 2030 and 2040, and some researchers argue it could come sooner. The precise timeline matters less than the risk calculus: traffic protected today by RSA-2048 or ECDH P-256 key exchange could be decrypted once such a machine exists.

Grover’s Algorithm

Grover’s algorithm provides a quadratic speedup for unstructured search problems. Against symmetric cryptography, this effectively halves the key strength: AES-128 drops to about 64 bits of security and AES-256 to about 128 bits. The mitigation is straightforward (use 256-bit keys, for example AES-256-GCM, wherever AES-128 remains), but it requires an audit to find where AES-128 is still in use.
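
The halving comes from comparing search costs for an n-bit key. Classical exhaustive search needs on the order of 2^n trials, while Grover’s algorithm needs about (π/4)·2^(n/2) iterations. These are iteration counts only; they ignore the considerable cost of evaluating AES inside a quantum circuit, so treat them as a rough bound rather than an attack estimate.

$$
T_{\text{classical}}(n) \approx 2^{n}, \qquad
T_{\text{Grover}}(n) \approx \frac{\pi}{4}\sqrt{2^{n}} = \frac{\pi}{4}\,2^{n/2}
$$

$$
T_{\text{Grover}}(128) \approx \frac{\pi}{4}\,2^{64} \approx 2^{63.7}, \qquad
T_{\text{Grover}}(256) \approx \frac{\pi}{4}\,2^{128} \approx 2^{127.7}
$$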

Harvest-Now, Decrypt-Later

This is the most immediate operational threat. State-sponsored adversaries and sophisticated threat actors are intercepting and storing encrypted communications today — TLS traffic, VPN sessions, encrypted databases, backup archives — with the expectation that quantum computers will eventually break the underlying cryptography. Any data with a required confidentiality period that extends past the estimated arrival of a CRQC is already at risk.
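
One common way to make this risk concrete is Mosca’s inequality: if X is how long the data must stay confidential, Y is how long migration will take, and Z is the time until a CRQC exists, the data is exposed whenever X + Y > Z. The sketch below applies it to a hypothetical inventory; the asset names, the 4-year migration estimate, and the 10-year value for Z are illustrative assumptions, not predictions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DataAsset:
    name: str                # hypothetical asset label
    shelf_life_years: float  # X: how long the data must stay confidential


def mosca_at_risk(shelf_life_years: float, migration_years: float,
                  years_until_crqc: float) -> bool:
    """Mosca's inequality: the data is exposed if X + Y > Z."""
    return shelf_life_years + migration_years > years_until_crqc


def assets_at_risk(assets: List[DataAsset], migration_years: float,
                   years_until_crqc: float) -> List[str]:
    """Names of assets whose confidentiality window outlasts the CRQC estimate."""
    return [a.name for a in assets
            if mosca_at_risk(a.shelf_life_years, migration_years, years_until_crqc)]


# Illustrative inputs only: Y = 4 years of migration, Z = 10 years (an assumption).
inventory = [
    DataAsset("session-cookies", 0.1),
    DataAsset("payment-records", 7),
    DataAsset("patient-records", 25),
]
print(assets_at_risk(inventory, migration_years=4, years_until_crqc=10))
# ['payment-records', 'patient-records']
```

Note that the migration time Y counts against you: data with a 7-year shelf life looks safe against a 10-year estimate until you add the years it will take to finish migrating.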

Regulated industries are particularly exposed. Healthcare records, financial transactions, government classified communications, and intellectual property with long commercial lifetimes all fall into this category. The U.S. National Security Agency’s CNSA 2.0 guidance requires national security systems to use quantum-resistant algorithms exclusively by 2030 for categories such as software and firmware signing and networking equipment, with the broader transition targeted for completion by 2035. Many enterprises are likely to follow a similar schedule.

NIST PQC Standards: The Core Algorithms

NIST’s Post-Quantum Cryptography Standardization Process ran from 2016 to 2024, evaluating dozens of candidate algorithms through multiple rounds of public scrutiny. The final selections fall into distinct categories, each designed for different cryptographic purposes.

ML-KEM (Module-Lattice-Based Key Encapsulation Mechanism)

Formerly known as CRYSTALS-Kyber, ML-KEM is a lattice-based key encapsulation mechanism. It is the designated replacement for RSA key exchange and ECDH in protocols like TLS, SSH, and IPSec. ML-KEM comes in three security levels: ML-KEM-512 (~NIST Level 1, lightweight), ML-KEM-768 (~NIST Level 3, recommended default), and ML-KEM-1024 (~NIST Level 5, highest assurance).

ML-KEM operates on structured lattices (module lattices), providing a strong balance of security, key size, and computational performance. ML-KEM-768 public keys are 1,184 bytes and ciphertexts are 1,088 bytes, compared with 32 bytes for an X25519 public key: notably larger than ECDH, but manageable for modern networks.

ML-DSA (Module-Lattice-Based Digital Signature Algorithm)

Formerly CRYSTALS-Dilithium, ML-DSA is the primary post-quantum digital signature algorithm for general-purpose use. It replaces RSA signatures, ECDSA, and EdDSA in certificate signing, code signing, document authentication, and protocol authentication. ML-DSA offers three security levels (ML-DSA-44, ML-DSA-65, ML-DSA-87) with signatures ranging from 2,420 to 4,627 bytes. Support has been added quickly to libraries such as BoringSSL, OpenSSL 3.5+, and wolfSSL.

SLH-DSA (Stateless Hash-Based Digital Signature Algorithm)

Formerly SPHINCS+, SLH-DSA provides a conservative, hash-based alternative for digital signatures. Its security relies solely on the well-understood properties of hash functions rather than lattice problems, making it a valuable hedge against future breakthroughs in lattice cryptanalysis. The trade-off is size — SLH-DSA signatures range from roughly 7.8 KB to 49.9 KB depending on the parameter set. This makes it less suitable for bandwidth-constrained environments but ideal for high-assurance use cases.

FN-DSA (FFT over NTRU-Lattice-Based Digital Signature Algorithm)

Formerly Falcon, FN-DSA is a lattice-based signature scheme built on NTRU lattices. It produces the most compact signatures among the NIST schemes (roughly 666 bytes for FN-DSA-512, with an 897-byte public key), but its implementation is significantly more complex than ML-DSA’s because signing relies on floating-point arithmetic and Gaussian sampling, which are hard to implement in constant time. FN-DSA is recommended for applications where signature size is critical.

| Metric | ML-KEM-768 | ML-DSA-65 | SLH-DSA-128f | FN-DSA-512 |
|---|---|---|---|---|
| Public Key | 1,184 B | 1,952 B | 32 B | 897 B |
| Signature / Ciphertext | 1,088 B | 3,309 B | ~17 KB | 666 B |
| NIST Level | Level 3 | Level 3 | Level 1 | Level 1 |
| Best For | TLS, SSH, VPN | PKI, Code Signing | High-Assurance | Size-Critical |

PQC vs QKD: Key Differences and Where Each Fits

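Quantum Key Distribution (QKD) and post-quantum cryptography are often conflated, but they are fundamentally different approaches to the quantum threat. PQC consists of new mathematical algorithms that run as software on existing computers and networks. QKD instead uses quantum physics, typically photons sent over dedicated fiber or free-space links, to distribute keys, and it requires specialized hardware.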

For most organizations, PQC is the right first step. QKD may have a role in specialized high-security environments — government networks connecting data centers, for example — but it does not replace the need for PQC. The two technologies are complementary, not competing. NIST, ENISA, and most national cybersecurity agencies recommend PQC as the primary migration path.

Hybrid TLS: ECDHE + ML-KEM in TLS 1.3

The practical deployment model for the next several years is hybrid cryptography — using both classical and post-quantum algorithms simultaneously. In TLS 1.3, this means combining ECDHE key exchange with ML-KEM in the key establishment phase.

The hybrid combination is negotiated as a single named group, such as X25519MLKEM768, in the supported_groups extension.

Implementation Status

- Chrome shipped hybrid ML-KEM-768 + X25519 in TLS 1.3 starting in late 2024

- Firefox followed with hybrid support in early 2025

- OpenSSL 3.5+ and BoringSSL support hybrid key exchange groups natively; older OpenSSL 3.x releases can add them through the Open Quantum Safe oqs-provider

- Cloudflare, AWS, and Microsoft have deployed hybrid TLS on production endpoints

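- Hybrid key exchange for TLS 1.3 is specified in IETF drafts such as draft-ietf-tls-hybrid-design and draft-ietf-tls-ecdhe-mlkem; it is negotiated as a key exchange group, not as a cipher suite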
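
To get a sense of the wire cost, the sketch below tallies the key shares for the X25519MLKEM768 group and shows how its two shared secrets are combined. It follows the layout in draft-ietf-tls-ecdhe-mlkem at the time of writing (ML-KEM component first, then X25519, with the two shared secrets concatenated before entering the TLS 1.3 key schedule). Random bytes stand in for real key-exchange outputs, and the all-zero salt is a placeholder for the value TLS 1.3 actually derives from the early secret.

```python
import hashlib
import hmac
import os

# Component sizes in bytes: ML-KEM-768 per FIPS 203, X25519 per RFC 7748.
MLKEM768_ENCAPS_KEY = 1184
MLKEM768_CIPHERTEXT = 1088
MLKEM768_SHARED_SECRET = 32
X25519_SHARE = 32
X25519_SHARED_SECRET = 32

# Hybrid key shares are concatenations of the two components.
client_share_len = MLKEM768_ENCAPS_KEY + X25519_SHARE  # 1216
server_share_len = MLKEM768_CIPHERTEXT + X25519_SHARE  # 1120


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract from RFC 5869, instantiated with SHA-256."""
    return hmac.new(salt, ikm, hashlib.sha256).digest()


# Placeholders: random bytes stand in for the real ML-KEM and X25519 outputs.
mlkem_ss = os.urandom(MLKEM768_SHARED_SECRET)
x25519_ss = os.urandom(X25519_SHARED_SECRET)

# The concatenated 64-byte secret takes the place of the single ECDHE secret
# as the input keying material for HKDF-Extract in the TLS 1.3 key schedule.
combined_ss = mlkem_ss + x25519_ss
placeholder_salt = bytes(32)  # TLS 1.3 derives the real salt from the early secret
handshake_secret = hkdf_extract(placeholder_salt, combined_ss)

print(client_share_len, server_share_len, len(combined_ss), len(handshake_secret))
# 1216 1120 64 32
```

Compared with 32-byte X25519 shares in each direction, the hybrid group adds 2,272 bytes per handshake. Because both secrets feed the key schedule, an attacker has to break both X25519 and ML-KEM-768 to recover the session keys.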

PKI Migration Challenges and Timelines

Public Key Infrastructure (PKI) is one of the most complex migration targets for PQC. Certificates, certificate authorities, intermediate chains, revocation mechanisms (CRLs, OCSP), and validation logic all need to be updated.

Key Challenges

- Certificate sizes: PQC public keys and signatures are significantly larger than classical ones. An ML-DSA-65 public key is 1,952 bytes, compared with 32 bytes for an Ed25519 public key, and an ML-DSA-65 signature is 3,309 bytes versus 64 bytes for Ed25519. This increases certificate sizes, CRL sizes, and OCSP response sizes.

- Chain validation: Certificate chains with mixed classical and post-quantum signatures require updated validation logic. Transition periods will see hybrid certificates.

- Cross-certification: Organizations with complex trust relationships face coordination challenges. All parties in a trust chain must support the new algorithms.

- Hardware constraints: Smart cards, TPMs, HSMs, and embedded devices often have firmware-bound cryptographic capabilities requiring updates or replacement.

- Long-lived certificates: Some organizations operate certificates with 10-20 year validity periods that cannot be rotated on short notice.
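
A rough way to quantify the certificate-size problem is to count only the public keys and signatures a TLS server sends: each certificate in the chain carries one public key plus its issuer’s signature, and the server adds one more signature in CertificateVerify. The sketch below assumes a two-certificate chain (leaf plus one intermediate) in which every key uses the same algorithm, and it ignores names, extensions, SCTs, and all other certificate fields.

```python
# Public key and signature sizes in bytes (Ed25519 per RFC 8032, ML-DSA-65 per FIPS 204).
SIZES = {
    "Ed25519": {"public_key": 32, "signature": 64},
    "ML-DSA-65": {"public_key": 1952, "signature": 3309},
}


def auth_bytes(algorithm: str, chain_length: int = 2) -> int:
    """Key and signature bytes in a server's certificate chain plus CertificateVerify.

    Assumes every certificate in the chain uses `algorithm`; other fields are ignored.
    """
    s = SIZES[algorithm]
    per_certificate = s["public_key"] + s["signature"]  # subject key + issuer signature
    return chain_length * per_certificate + s["signature"]  # + CertificateVerify


for alg in SIZES:
    print(alg, auth_bytes(alg))
# Ed25519 256
# ML-DSA-65 13831
```

Going from 256 bytes to roughly 13.8 KB of authentication data per handshake is one reason chain length and parameter-set choices deserve attention early in a PKI migration.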

Code Signing and Firmware Trust in a PQC World

Code signing is a high-priority migration target because signed binaries and firmware images often persist in the field for years — sometimes decades in industrial, medical, and automotive contexts. An adversary who can forge code signatures can distribute malicious updates that appear authentic.

The migration path for code signing involves:

- Adopting ML-DSA or FN-DSA as the primary signing algorithm for release artifacts

- Multi-signing — signing binaries with both classical (e.g., ECDSA) and post-quantum (e.g., ML-DSA) signatures during the transition period

- Updating build pipelines and CI/CD systems to use PQC-capable signing tools (for example, OpenSSL 3.5+ or custom HSM integrations), and tracking PQC support in tools such as Sigstore

- Firmware secure boot chains require particular attention because the root of trust is often burned into hardware

- Supply chain tooling such as SBOM generators, artifact attestation services (SLSA), and binary transparency logs must also support PQC signatures
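
Much of the multi-signing step comes down to verifier policy. The sketch below shows one hypothetical way to express it: a release carries a list of signatures tagged with algorithm labels, and each verifier decides which algorithms it requires. The verifier callables, the algorithm labels, and the choice to sign a SHA-256 digest of the artifact are illustrative assumptions; a real pipeline would plug in ECDSA and ML-DSA verification from a vetted library.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, List

# A verifier checks a signature over a message and returns True or False.
# Real implementations (ECDSA, ML-DSA) would come from a vetted library.
Verifier = Callable[[bytes, bytes], bool]


@dataclass
class Signature:
    algorithm: str  # illustrative label, e.g. "ecdsa-p256" or "ml-dsa-65"
    value: bytes


def verify_release(artifact: bytes, signatures: List[Signature],
                   verifiers: Dict[str, Verifier], required: List[str]) -> bool:
    """Accept the artifact only if every required algorithm has a valid signature.

    Signatures are assumed to cover the SHA-256 digest of the artifact.
    Signatures with unknown algorithm labels are ignored rather than rejected,
    so older verifiers keep working when a new signature type is added.
    """
    digest = hashlib.sha256(artifact).digest()
    valid = {
        sig.algorithm
        for sig in signatures
        if sig.algorithm in verifiers and verifiers[sig.algorithm](digest, sig.value)
    }
    return all(alg in valid for alg in required)
```

During the transition, a legacy verifier might pass `required=["ecdsa-p256"]` while an upgraded one passes both labels. Tightening the `required` list over time, rather than changing the artifact format, is what keeps the migration incremental.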

Crypto Agility as a Strategy

Crypto agility is the ability of a system to switch cryptographic algorithms without requiring a complete redesign or redeployment. It is not a product you buy — it is an architectural property you build into your systems.

- Algorithm negotiation: Protocols should negotiate algorithms dynamically. TLS does this through cipher suite and supported_groups extensions. Your internal protocols should do the same.

- Key and certificate management: Automated certificate lifecycle management (CLM) with short-lived certificates reduces the cost of algorithm transitions.

- Modular cryptographic libraries: Use algorithm-agnostic interfaces. Instead of calling `ECDH_compute()`, call `KEM_encapsulate(algorithm, public_key)`, where `algorithm` can be ECDH, ML-KEM, or a hybrid combination.

- Inventory and visibility: Maintain a comprehensive inventory of every cryptographic algorithm in use across your infrastructure, including libraries, protocols, HSMs, embedded devices, and third-party dependencies.

- Standardized configuration: Use centralized configuration for algorithm preferences. If a vulnerability is discovered, update preferences in one place and propagate the change.

PQC Migration Roadmap for Organizations

A structured migration roadmap reduces risk and ensures resources are allocated efficiently. The following phased approach is recommended:

Conclusion

The transition to post-quantum cryptography is one of the most significant shifts in information security since the adoption of public-key cryptography in the 1970s. NIST has finalized the standards — ML-KEM for key exchange, ML-DSA for general-purpose signatures, SLH-DSA as a conservative hash-based hedge, and FN-DSA for size-critical applications. Major browsers, cloud providers, and cryptographic libraries have already begun deploying hybrid solutions.

The quantum threat is not hypothetical. Harvest-now-decrypt-later attacks are happening today against data that must remain confidential for years or decades. The organizations that will weather this transition are those that start with a cryptographic inventory, prioritize by risk, and begin deploying hybrid cryptography now — not when the first CRQC arrives, but while there is still time to migrate methodically.

Start with your TLS infrastructure, then move to PKI and code signing. Build crypto agility into every system you design. And remember: the question is no longer whether to migrate to post-quantum cryptography, but how quickly your organization can move. The quantum clock is ticking.

Key Takeaways

- The quantum threat is real and time-bounded. Harvest-now-decrypt-later attacks mean the clock started years ago. Data with long confidentiality requirements is already at risk.

- NIST has finalized the first PQC standards. ML-KEM (key encapsulation), ML-DSA (signatures), SLH-DSA (hash-based signatures), and FN-DSA (compact signatures) are ready for deployment.

- PQC is software — deploy it now. Unlike QKD, PQC runs on existing hardware and integrates into existing protocols. There is no infrastructure prerequisite.

- Hybrid deployment is the recommended approach. Combining classical and post-quantum algorithms provides defense in depth during the transition period.

- PKI and code signing are the highest-priority targets. Long-lived signatures and trust chains require the longest lead time to migrate.

- Crypto agility is not optional. Build it into your architecture so you can respond to future algorithmic changes without redesigning your systems.

- Start with a cryptographic inventory. You cannot migrate what you do not know about. Catalog every algorithm, key, and certificate in your environment.

- The migration is a multi-year program. Plan for phased deployment, testing, and monitoring across a 3-5 year timeline. Start today.